ChatGPT March Madness bracket: prompt pack + audit workflow

To build a ChatGPT March Madness bracket you can defend, treat the model like a rule-following decision assistant, not a prediction engine. Use a short prompt pack that forces a complete bracket, an upset budget, and confidence tiers for every pick. Save the output, then audit it after the tournament so you can tighten your rules for 2027.

Your bracket’s due soon, and you know the feeling: you recognize a few blue-blood programs, you’ve got a vague sense that “12 over 5 happens,” and you still need to submit something that doesn’t crater in the first weekend. The fastest way to stop the chaos is to lock your rules before you pick a single winner; once the rules are set, ChatGPT is useful because it stays consistent across 63 games instead of chasing storylines.

I’ll be honest: the main win here is accountability. You’re not trying to beat a sportsbook model; you’re trying to make picks that match your pool’s scoring and your own risk tolerance. That means you need two things your gut bracket rarely gives you—an explicit risk budget and a way to label uncertainty so you don’t treat a coin flip like a “lock.”

What can ChatGPT do for a March Madness bracket (and what can’t it do)?

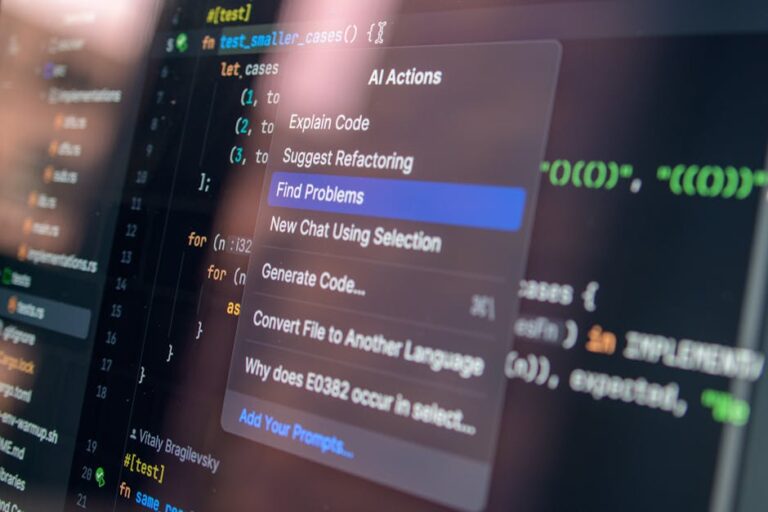

ChatGPT can help you produce a structured bracket that follows your rules across every matchup, round by round. It can’t reliably “know” late-breaking injuries, rotation tweaks, or day-of news unless you supply that information, so treat missing inputs as risk—not as permission to guess.

That limitation shows up in the games people love to get cute with: popular mid-major upset picks, teams with shaky point guards, and squads whose style either travels well or collapses under pressure. If you don’t give the model verified context, it will fill gaps with plausible-sounding narratives; your job is to make it label uncertainty and keep the bracket’s overall shape realistic.

Use the tournament’s official structure as your guardrails. When you paste the official bracket and insist that seeds and matchups are ground truth, you cut down on formatting errors and hallucinated pairings. If you need a quick refresher on the round names and bracket mechanics, the NCAA overview is a clean reference you can point to and copy from: NCAA March Madness bracket.

If you want the simplest, safest approach, use ChatGPT to enforce constraints, not to “predict outcomes.” Skip this method when your pool rules ban outside tools or when you aren’t willing to sanity-check at least the riskiest picks; in that case, a conservative seed-based bracket is less work and usually less drama.

How do you get a full March Madness bracket from ChatGPT without messy outputs?

You get a usable output by demanding completeness, a fixed format, and one-pass execution. Start by telling ChatGPT to produce picks for every matchup, in bracket order, with a consistent line format you can paste into your pool without rewriting.

Write your instructions like a template, not a conversation. Require region-by-region output, every round listed, and a one-sentence reason attached to each pick; also add a constraint that prevents stalling: it can ask you only one clarification question, and only if it truly can’t proceed. That keeps you from losing time to back-and-forth when the deadline is close.

Then paste your bracket source link (or a text version of matchups) and explicitly say it is the “ground truth.” After that, add your pool’s scoring details in plain language, because a bracket that’s fine for a 12-person office pool can be a bad fit for a 300-entry pool that rewards contrarian champion picks.
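If you’d rather assemble that prompt the same way every year, a few lines of Python can do it. The sketch below is one way to write it, not a required format: the function name `build_bracket_prompt`, the exact rule wording, and the pipe-separated line format are assumptions made for illustration, and the sample bracket and scoring text are placeholders.

```python
def build_bracket_prompt(bracket_text: str, scoring_notes: str) -> str:
    """Assemble one fixed-format prompt (rule wording is illustrative)."""
    rules = [
        "Treat the bracket below as ground truth for seeds and matchups.",
        "Pick a winner for every matchup, region by region, in bracket order.",
        "List every round, from the Round of 64 through the championship.",
        "Use one line per pick: ROUND | REGION | WINNER (seed) over LOSER (seed) | reason.",
        "Give a one-sentence reason for each pick.",
        "Ask at most one clarification question, and only if you cannot proceed.",
    ]
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        f"RULES\n{numbered}\n\n"
        f"POOL SCORING\n{scoring_notes.strip()}\n\n"
        f"BRACKET (ground truth)\n{bracket_text.strip()}\n"
    )


# Placeholder inputs; paste your real bracket text and pool rules instead.
prompt = build_bracket_prompt(
    bracket_text="East: (1) Team A vs (16) Team B",
    scoring_notes="1-2-4-8-16-32 points per round, 18 entries",
)
print(prompt)
```

Keeping the rules in a list makes it easy to add or drop one without rewriting the whole prompt.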

When responses get cut off mid-bracket, or you’re unsure which tier of access you need for longer, structured outputs, check the limits for each plan instead of guessing. The goal is fewer cut-off responses and fewer re-prompts. This breakdown helps you choose based on workflow constraints: model limits by plan and workflow picks.

What is an upset budget, and how do you set one that fits your pool?

An upset budget is a cap on how many underdogs you allow yourself to pick in each round, usually with a seed-difference rule. It keeps your bracket from drifting into fantasy mode where you stack too many double-digit upsets and get wiped out early.

Set the budget around two forces: survival and differentiation. In a small pool, survival matters more because you don’t need to outsmart a crowd; you need to avoid the most common early landmines. In a large pool, you still can’t spray upset picks everywhere, but you may accept a bit more early variance so you aren’t tied with 40 identical chalk brackets by the Sweet 16.

A practical default most people can live with looks like this: in the Round of 64, allow 4 to 6 upsets total across the entire bracket, and focus on moderate seed gaps (think 11 over 6, 10 over 7, 9 over 8). In the Round of 32, tighten to 2 or 3. Past the first weekend, let your bracket drift back toward top seeds unless you have a specific mismatch you can articulate in one sentence.
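To check a finished bracket against that default, you can count upsets per round and compare them with your caps. This is a minimal sketch under stated assumptions: the round labels `R64` and `R32`, the tuple layout, and the function names are hypothetical, the caps use the upper end of each range above, and the sample picks are seed numbers only, not real teams.

```python
from collections import Counter

# Upper ends of the default ranges above: up to 6 upsets in the
# Round of 64 and up to 3 in the Round of 32. Adjust for your pool.
UPSET_CAPS = {"R64": 6, "R32": 3}


def count_upsets(picks):
    """Count picks per round where the winner has the worse (larger) seed number."""
    counts = Counter()
    for round_name, winner_seed, loser_seed in picks:
        if winner_seed > loser_seed:
            counts[round_name] += 1
    return counts


def over_budget(picks, caps=UPSET_CAPS):
    """Return {round: excess upsets} for every round that exceeds its cap."""
    counts = count_upsets(picks)
    return {r: counts[r] - cap for r, cap in caps.items() if counts[r] > cap}


# Hypothetical picks as (round, winner_seed, loser_seed).
picks = (
    [("R64", 11, 6)] * 4
    + [("R64", 12, 5)] * 3
    + [("R64", 1, 16)] * 4
    + [("R32", 11, 3)] * 2
)
print(count_upsets(picks))
print(over_budget(picks))
```

An empty result from `over_budget` means the bracket is inside the budget; anything else names the round where you need to swap picks back to the favorite.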

Concrete example: imagine you’re in an ESPN Tournament Challenge group with 18 entries. You’ll usually do better by limiting yourself to one “headline” upset per region and letting the rest of your differentiation come from a couple of mid-seed teams making a slightly deeper run than the crowd expects. Meanwhile, in a 200-entry pool on CBS Sports, you can keep the same early upset budget but choose a less popular champion path, so that when the favorite stumbles you move ahead of most of the field instead of sharing its fate.

How do confidence tiers keep your bracket from imploding by Friday night?

Confidence tiers are labels you assign to every pick so you can see where your bracket is fragile before you submit it. They don’t make picks smarter by magic; they make your risk visible so you can rebalance it while you still have time.

Use three tiers, and keep them behavioral. Tier A means you’d pick it again even after a quick sanity check. Tier B means it’s a reasonable pick but sensitive to one or two variables. Tier C means it’s a coin flip and you’re picking a side for bracket reasons, not because you “know” it’s right.

The key move is a cap. If you stack Tier C picks in one region, you build a bracket that can look clever and still die in one night. A simple rule prevents that: no more than two Tier C picks in the Round of 64 per region. When you spot three or four Tier C picks clustered together, you either swap one back to the favorite or you relocate an upset to a different region where your bracket is too chalky.
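The cap is easy to check mechanically once each pick carries a region and a tier. The sketch below assumes a hypothetical pick format (a dict with `round`, `region`, `tier`, and `winner` keys) and placeholder team names; it flags only the regions that break the two-per-region rule described above.

```python
from collections import defaultdict

MAX_TIER_C_PER_REGION = 2  # cap from the rule above, Round of 64 only


def tier_c_overflow(picks, cap=MAX_TIER_C_PER_REGION):
    """Return {region: [Tier C winners]} for regions over the cap in the Round of 64."""
    by_region = defaultdict(list)
    for pick in picks:
        if pick["round"] == "R64" and pick["tier"] == "C":
            by_region[pick["region"]].append(pick["winner"])
    return {region: teams for region, teams in by_region.items() if len(teams) > cap}


# Placeholder picks; team names are stand-ins, not real selections.
picks = [
    {"round": "R64", "region": "East", "tier": "C", "winner": "Team A"},
    {"round": "R64", "region": "East", "tier": "C", "winner": "Team B"},
    {"round": "R64", "region": "East", "tier": "C", "winner": "Team C"},
    {"round": "R64", "region": "West", "tier": "C", "winner": "Team D"},
    {"round": "R64", "region": "West", "tier": "A", "winner": "Team E"},
    {"round": "R32", "region": "West", "tier": "C", "winner": "Team D"},
]
print(tier_c_overflow(picks))
```

Here only the East region is flagged, which tells you where to swap a pick back to the favorite or move the risk elsewhere.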

Here’s the audit-friendly way to make ChatGPT explain itself without letting it ramble: after it outputs Round of 64 picks, ask it to justify each upset with three bullet points—why the underdog can win once, what breaks for the favorite, and the biggest unknown. You’re not chasing eloquence; you’re looking for unsupported claims. If the explanation leans on vague “momentum,” downgrade the confidence tier and move on.

“Creating helpful, reliable, people-first content is at the heart of what our ranking systems seek to reward.” — Google, Search Central (Creating helpful, reliable, people-first content)

If you want the official context behind that principle, use the source directly: Google’s guidance on creating helpful, reliable, people-first content. The same discipline applies to brackets: show assumptions, label uncertainty, and don’t pretend you have data you don’t have.

What data should you give ChatGPT to improve bracket decisions?

You should give ChatGPT data that can change a pick, not a giant stats dump it will summarize and forget. The most useful inputs are availability notes you can verify, travel or rest context when it’s relevant, and simple style cues that explain how a game could swing.

Build a small “team card” format you can reuse. Keep it short so you can scan it without losing your place: seed and record, offense style (pace and shot profile), defense style (rim protection, turnovers), one verified availability note, and one context factor (travel, altitude, short turnaround). With cards like this, the model is less likely to invent precision and more likely to follow your constraints.
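One way to keep team cards uniform is to define the fields once and render them as text you can paste into the chat. This is a sketch, not a prescribed schema: the class name `TeamCard`, its field names, and the default of "unverified" for a missing availability note are assumptions, and the sample values are placeholders.

```python
from dataclasses import dataclass


@dataclass
class TeamCard:
    name: str
    seed: int
    record: str
    offense: str  # pace and shot profile
    defense: str  # rim protection, turnovers
    availability: str = "unverified"  # one verified note, or say so
    context: str = "none noted"  # travel, altitude, short turnaround

    def to_prompt(self) -> str:
        """Render the card as a short, scannable block of text."""
        return "\n".join([
            f"TEAM: {self.name} ({self.seed} seed, {self.record})",
            f"OFFENSE: {self.offense}",
            f"DEFENSE: {self.defense}",
            f"AVAILABILITY: {self.availability}",
            f"CONTEXT: {self.context}",
        ])


# Placeholder card; replace every value with information you have verified.
card = TeamCard(
    name="Team A",
    seed=12,
    record="25-7",
    offense="slow pace, high three-point volume",
    defense="forces turnovers, weak rim protection",
)
print(card.to_prompt())
```

Defaulting the availability line to "unverified" means a missing note shows up in the prompt as risk instead of silently disappearing.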

Example workflow: imagine you’re deciding between two trendy upset candidates you saw on social media. You give the model two team cards and tell it to pick only one upset in that pair, inside your budget, with a Tier label. If it can’t justify the pick without leaning on unknowns, it must mark the pick Tier C. In a few minutes you’ll have a bracket that’s aggressive in a controlled way, rather than one that gambles everywhere.

When you want a faster way to choose the right assistant for this kind of structured, templated output, use the AI tool finder as a quick filter. Don’t treat it as a requirement, though. Your method matters more than the brand name on the chat box.

How do you audit your ChatGPT March Madness bracket after the tournament?

A bracket audit is a simple after-action review that turns “that felt smart” into rules you can reuse next year. You’re measuring decision quality by round and by confidence tier, not trying to rewrite history based on one bad bounce.

Keep the audit boring and consistent so you can compare 2026 to 2027 without moving the goalposts. Track accuracy by round, upset hit rate, and tier calibration (Tier A hit rate, Tier B hit rate, Tier C hit rate). Plus, add one more column that people skip and later regret: a change log for manual overrides you made after ChatGPT’s output, with a short reason.
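If your audit sheet lives in a spreadsheet export or a small script, the three metrics reduce to grouped hit rates. The sketch below assumes a hypothetical row format (a dict with `round`, `tier`, `upset`, and `correct` keys) and uses made-up sample rows purely to show the calculation; it does not cover the override change log.

```python
from collections import defaultdict


def hit_rates(picks, key):
    """Share of correct picks, grouped by the given field ("round" or "tier")."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for pick in picks:
        totals[pick[key]] += 1
        hits[pick[key]] += int(pick["correct"])
    return {group: hits[group] / totals[group] for group in totals}


def upset_hit_rate(picks):
    """Share of upset picks that were correct, or None if no upsets were picked."""
    upsets = [p for p in picks if p["upset"]]
    if not upsets:
        return None
    return sum(int(p["correct"]) for p in upsets) / len(upsets)


# Made-up audit rows for illustration only.
picks = [
    {"round": "R64", "tier": "A", "upset": False, "correct": True},
    {"round": "R64", "tier": "A", "upset": False, "correct": True},
    {"round": "R64", "tier": "B", "upset": True, "correct": True},
    {"round": "R64", "tier": "C", "upset": True, "correct": False},
    {"round": "R32", "tier": "B", "upset": False, "correct": False},
    {"round": "R32", "tier": "C", "upset": True, "correct": True},
]
print(hit_rates(picks, "round"))
print(hit_rates(picks, "tier"))
print(upset_hit_rate(picks))
```

Running the same functions on each year’s sheet keeps the comparison consistent, because the definitions of “hit” and “upset” never change between audits.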

The expected result is clarity about where your bracket strategy is too loose. If your Tier C picks perform like coin flips, that’s not a surprise; it means the label was honest, and if those misses cost you more than your bracket could absorb, it’s a sign you should reduce how many Tier C picks you allow per region. And if your upset budget did fine in the Round of 64 but you got crushed in the Round of 32, your budget may be fine and your late-weekend “cute picks” may be the real problem.

Save your prompt pack and your audit sheet in the same folder so you can reuse them on Selection Sunday. If you want a ready-made reference to compare against when you build your next version, keep a link to your own workflow notes or revisit a deeper walk-through of the same concept: a prompt pack and audit workflow for bracket picks.

Lock the rules first: copy your bracket into a fixed-format prompt, set an upset budget by round, force confidence tiers, and cap low-confidence picks per region before you submit. Save the output, then start a simple audit sheet now (even if you fill it in later) so next year’s bracket is based on what held up, not vibes.

FAQ

Can ChatGPT predict March Madness winners accurately?

ChatGPT isn’t a sportsbook-grade prediction model, and it won’t automatically know late-breaking news unless you provide it. It’s most useful for applying consistent rules, producing a complete bracket format, and labeling uncertainty so you can control risk.

What’s a safe upset budget for a small office pool?

A practical baseline is 4 to 6 Round of 64 upsets across the whole bracket, then 2 to 3 in the Round of 32, with mostly top seeds after the first weekend. Small pools reward survival more than extreme differentiation.

How do you stop an AI bracket from stacking too many longshot upsets in one region?

Use confidence tiers and a cap, such as no more than two Tier C picks per region in the Round of 64. If a region has three or more low-confidence picks, swap at least one back to the favorite or move risk elsewhere.

What should you do when you can’t verify an injury or availability note?

Treat missing verification as added risk and downgrade the pick’s confidence tier. If that creates too many low-confidence picks in a region, choose the favorite and use your upset budget on a game you can justify cleanly.